Autonomous (Self-Driving) Vehicles (& Ships) (& Planes)

Lots of interesting issues here. Autonomous vehicles seem certain to be much safer (on average) than those controlled by humans. But their successful introduction will require advances on many fronts, and will also depend upon a satisfactory allocation of risk.

It has already taken a long time for the technology to become truly road-ready. I remember, in around 1993, being driven in a technology-demonstrating Jaguar on a fairly quiet French motorway. The car was able to stay in lane by tracking the two white lane markers, and could adjust its distance from the vehicle in front.

Autonomous vehicles are classified into six levels, from Level 0 (no automation) to Level 5 (full automation). In 2019, industry experts predicted that a Level 4 car, able to drive itself completely under certain circumstances, would come to market by 2024. If so, new rules for cities and citizens would be urgently needed. The same experts said that fully autonomous (Level 5) vehicles would probably not be available until 2029 at the earliest.

Elliott Sclar argued, rather persuasively, that:

All transport is interaction between moving vessel and travel medium. Land-based modes require major capital investments in suitable physical infrastructure and administrative co-ordination between vehicle and travel medium. To date it has been implicitly assumed that existing configurations of streets, roads, traffic signalling, sidewalks and so on remain about as they are; only the physical driver disappears. It is important to remember that the present configurations evolved assuming human-operated rolling stock. The effectiveness, efficiency and safety of the new mode will greatly depend upon the degree to which the infrastructure is modified to accommodate its technology. All the adjustment cannot and will not be done in the vehicle.

This seems likely to be particularly true in major British cities, where cautious autonomous vehicles will find it very difficult to judge when it is safe to change lane (think Hyde Park Corner!) or to slip out of a side road into a busy high street. Moreover, driverless cars will occasionally need to be programmed to mount the pavement in order to drive round a broken-down vehicle, or to make way for an oncoming car or an emergency vehicle. And they will need to be able to edge their way through crowds of pedestrians, after major sports events for instance. Programming such behaviour will be far from easy.

Indeed, the problem may be worse than that. In New York and London the unwritten rule for pedestrians is plain: cross the street whenever and wherever you like, just don’t get hit. But if pedestrians know they’ll never be run over, jaywalking could explode, grinding traffic to a halt. Will we need fences along every pavement? Or hyper-strict anti-jaywalking legislation?

A more detailed discussion of this subject may be found in John Adams' blog post "The pathway to driverless cars and the sacred cow problem", together with his presentation on the same subject, and in an interesting Grants article on the financial aspects of the problem. ("It’s the human response to the plan that usually trips up the planners.")

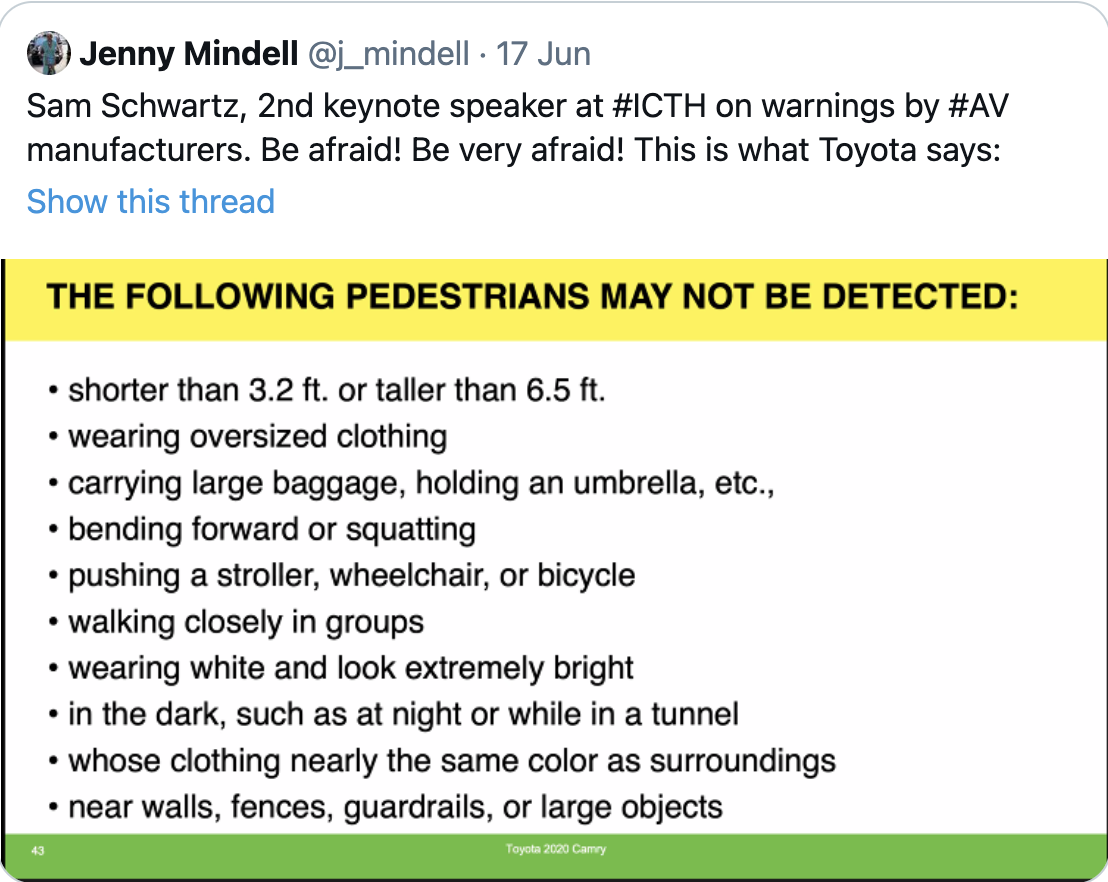

Toyota seem to appreciate the scale of some of the problems that they face:

Alternatively, or in addition, will we hold autonomous vehicles to higher standards than human drivers? For instance:

- Should the algorithms sacrifice the vehicle's driver if that is the only way to avoid killing, say, several pedestrians? Or just one pedestrian who was jaywalking, but was only five years old?

- Should driverless cars be fitted with a dashboard dial allowing you to choose whether the lives of the car's occupants should always outweigh those of others, or whether the car should always sacrifice its passengers?

Research (such as the Moral Machine Experiment) shows that people generally say they would rather spare a young life than an older one, but:

- It would often be impossible to identify the characteristics of a potential victim quickly enough.

- Stated preferences varied somewhat between cultures. (Respondents in Confucian societies showed a weaker preference for sparing the young than their Western counterparts.)

- Stated preferences may not reflect our real-life reactions. Would you (would everyone?) really choose to swerve and kill yourself rather than a child who had run out in front of you?

- It might be better to require those who create the risk (that is, those in the vehicle) always to bear the consequences, so that a car with failed brakes would preferentially harm its occupants rather than pedestrians and cyclists.

- Or perhaps cars should always preferentially harm their occupants rather than pedestrians and other road users?

But vehicle manufacturers could also be required to design safety into their products: soft bumpers, say.

In 2019 the SMMT (Society of Motor Manufacturers and Traders) published a detailed review of current technology (Connected and Autonomous Vehicles), together with a brave look into the future. It will be interesting to see how accurate their predictions turn out to be.

Legal Liability

The Law Commission recommended in December 2020 that the 'user in charge' of a self-driving car should not be held criminally responsible for careless driving and similar offences while self-driving mode is activated. Instead, responsibility should fall on the manufacturer or developer of the vehicle. But the person responsible for the vehicle would still need to stay sober in case they needed to take control.

Insurance

If vehicles are programmed to follow the rules of the road, and are never driven by tired, inattentive or drunk drivers, that alone will instantly save loads of lives.

The government announced in November 2017 that self-driving cars would be in use in the UK by 2021, and that insurers would be required to cover injuries to all parties whether or not a human driver had intervened before his or her vehicle was involved in a collision. This implies a fundamental shift in road traffic law, away from personal liability and towards product liability. But which manufacturer will be held liable: that of the hardware (the car) or that of the software?

I have heard a suggestion that there should be a system of 'no-fault' insurance, but I suspect that this would suffer from the free-rider problem. Why would any manufacturer work hard to add yet more safety to its systems if the risk were shared collectively?

Whatever the outcome, it might well become a template for other high-tech insurance schemes. Who, for instance, is to be held liable when a robot deployed by my dentist to fix my teeth goes wrong?

Follow this link to read about the psychology involved in our attitude to Risk and Regulation.

Autonomous Ships

... may be on the way, too, though the main aim will be to reduce the number of marine accidents, 80% of which are currently caused by human error. But the savings achieved by removing the final few crew members will be pretty small, and their removal could lead to more piracy. It was therefore interesting to read that 79% of maritime professionals expected the first autonomous ships to arrive by 2028 and a further 14% by 2038, with the remaining 7% thinking it would take longer or would never happen. The corresponding figures for when autonomous ships would be commonly deployed were 16%, 56% and 28% respectively. We shall see!!

I suspect we shall see autonomous aircraft rather sooner than that - though it is currently hard to imagine a plane without someone up front.